This series, born from Forum’s Call for Start-ups, focuses on how the edge stack diverges from the cloud: memory, interoperability, and developer tools purpose-built for edge AI deployments.

The edge AI industry is booming, but a fundamental infrastructure problem is holding it back.

For most of computing history, intelligence lived far away from the device in your hand. If you wanted a camera to recognize a face in 2012, the image left your phone, traveled to a server, got processed, and a result came back. Latency was just the cost of doing business. The device was a window. The brain was elsewhere.

That started changing around 2015. Researchers figured out how to shrink neural networks small enough to run locally, and chip vendors raced to keep up. Qualcomm repurposed its audio processor as a neural network accelerator. Apple built the first dedicated Neural Engine into the iPhone in 2017. The goal was the same everywhere: bring AI off the cloud and onto the device. But every vendor did it their own way, bolting AI capabilities onto hardware that was never designed for it. That decision, made independently by dozens of companies over the following decade, is where today's fragmentation problem was born.

–

That fragmentation did not happen by accident. It happened because dozens of companies made independent decisions over a decade, each optimizing for their own market without any shared standard to build toward.

The edge AI stack has attracted serious capital and serious competition at every layer of the value chain. But the players are not all solving the same problem. Some are racing to build the fastest silicon. Others are trying to own the software layer above it. And a few are betting that the path to dominance runs through a specific vertical, like automotive or industrial vision, rather than the horizontal platform play. Here is where the money has gone and who is fighting for what.

"We've seen this pattern before, fragmented hardware, proprietary toolchains, and a massive market waiting for the neutral layer that unlocks it. Vendor lock-in is a massive headwind on edge AI advancement, and every platform shift tells us the company that removes it captures outsized value." - James Murphy, Managing Partner at Forum Ventures

The incumbents

- Qualcomm — its Snapdragon chips and Hexagon NPU are inside the majority of Android phones, and it is pushing hard into AI PCs and automotive. The most widely deployed edge AI silicon in the world.

- Apple — not selling chips to anyone, just widening its hardware lead with every A-series and M-series generation. Apple Intelligence is the bet that the Neural Engine becomes a reason people never leave the ecosystem.

- Arm — does not make chips but licenses the blueprints most chip designers build from. Structurally, it sits underneath almost everything.

- Intel — fighting on two fronts after ceding mobile to Arm and AI infrastructure to Nvidia. Launched its Tiber Edge Platform in early 2025 and is betting on industrial and smart city deployments.

- Nvidia — primarily a data center company, but its Jetson platform is the default choice for robotics and industrial edge AI. Following serious compute wherever it goes.

- MediaTek and Samsung — quieter than the others but shipping at enormous volume across mid-range Android devices. Not winning benchmarks, but in more pockets than almost anyone else.

The challengers

Growth stage: companies past early validation, raising serious capital, and establishing real market positions.

- Hailo — its Hailo-8 delivers 26 TOPS at under 3W, one of the best performance-per-watt ratios on the market. Strong traction in smart cameras, retail analytics, and industrial vision, with automotive designs coming next.

- SiMa.ai — targeting computer vision at the edge with an MLSoC built for sub-5W deployments. Synopsys collaborated with the company in late 2024 to improve its automotive edge AI SoCs.

- Tenstorrent — Jim Keller's company, building RISC-V based chips with a strong open-source software stack as a direct counter to the proprietary toolchain problem. One of the few startups targeting both edge and data center workloads.

- Axelera AI — uses a Digital In-Memory Computing architecture that processes data inside memory rather than moving it back and forth, attacking one of the core bottlenecks in edge inference. Raised €61.6 million from EuroHPC in March 2025.

- Rebellions — raised $124 million in Series B backed by KT Corp, with strong government support behind it. Part of a broader geopolitical race to build domestic AI semiconductor capacity in Asia.

Early stage: companies still finding product-market fit but attacking interesting parts of the problem.

- MemryX — raised $80 million total, with its MX3 chip delivering real compute at under 1W. Targeting always-on applications like wearables and smart home devices where power budget is everything.

- Mythic — building analog compute chips where the math happens inside memory rather than on a traditional processor. Raised $125 million. A very different architectural bet on power efficiency.

- EdgeCortix — building chips that can restructure themselves on the fly depending on which AI model is running. A direct attack on the CNN-versus-transformer problem that tripped up most of the industry.

- Nexa AI — coming at the problem from the software side. Building an SDK that lets developers deploy models across any chip without writing hardware-specific code. The closest thing to the software abstraction layer play described later in this piece.

- Gemesys — pre-seed, building brain-inspired chips based on memristors, a novel type of memory component, that can reportedly run both training and inference on-device with far less data than conventional chips need.

Who sells to whom?

Understanding why this market is so hard to crack requires understanding how the supply chain actually works, because the leverage points are not where most people expect them to be.

The market has a pretty clear supply chain. Silicon IP companies like Arm license NPU blueprints to SoC designers (Qualcomm, Apple, MediaTek, Samsung). Those SoC designers sell chips to device OEMs (phone makers, laptop makers, camera manufacturers, car companies). Device OEMs ship products to consumers and enterprises. Then software developers sit on top of all of it, building apps that have to somehow run on every variant in that chain.

Where is it used today?

The fragmentation problem is not theoretical. It is showing up in real products, across real industries, right now.

Edge AI is already in more places than most people realize. Nearly a billion smartphones shipped with dedicated AI chips in 2025, running everything from computational photography and real-time translation to on-device assistants like Apple Intelligence and Gemini Nano. Cars are the second major deployment, and arguably the most demanding, with cameras doing real-time object detection, lane tracking, and driver monitoring where a wrong answer has real consequences. Warehouses, retail stores, and factories are not far behind, using always-on cameras and sensors for automation and quality control.

The newest and most contested battleground is the AI PC. Intel, Qualcomm, and AMD are all racing to define what that means in practice, and it is where the concurrent model problem hits hardest. A laptop user might have a voice assistant, a writing tool, a camera app, and background system intelligence all running at once, each one competing for the same NPU. Nobody has figured out how to manage that gracefully yet, which is precisely what makes it such a rich target for the right software solution.
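
To make that contention concrete, here is a minimal sketch of four apps queuing for a single NPU and being served strictly by priority. The app names, priorities, timings, and the `NpuQueue` class are all hypothetical, invented for illustration; this is not how any shipping scheduler works.

```python
import heapq
from dataclasses import dataclass, field
from itertools import count


@dataclass(order=True)
class Request:
    priority: int                         # lower number = more urgent
    seq: int                              # tie-breaker: first come, first served
    app: str = field(compare=False)
    cost_ms: int = field(compare=False)   # assumed NPU time the job needs


class NpuQueue:
    """Hypothetical single-NPU queue: one job runs at a time, no preemption."""

    def __init__(self):
        self._heap = []
        self._seq = count()

    def submit(self, app, priority, cost_ms):
        heapq.heappush(self._heap, Request(priority, next(self._seq), app, cost_ms))

    def drain(self):
        """Run every queued job in priority order; return (app, start_ms, end_ms)."""
        clock, timeline = 0, []
        while self._heap:
            req = heapq.heappop(self._heap)
            timeline.append((req.app, clock, clock + req.cost_ms))
            clock += req.cost_ms
        return timeline


queue = NpuQueue()
queue.submit("background_indexing", priority=3, cost_ms=40)
queue.submit("voice_assistant", priority=0, cost_ms=15)
queue.submit("camera_effects", priority=1, cost_ms=10)
queue.submit("writing_tool", priority=2, cost_ms=25)

timeline = queue.drain()
for app, start, end in timeline:
    print(f"{app:20s} {start:3d} ms -> {end:3d} ms")
```

Even in this toy version, the lowest-priority job waits 50 ms before it starts. A real runtime would also have to handle preemption, memory limits, and fairness between apps, none of which is modeled here.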

VC funding for AI semiconductor companies grew 75% in 2025, from $4.8 billion to $8.4 billion, even as deal count fell. Investors are not spreading bets wider. They are concentrating larger checks on fewer companies they believe can break out.

The pattern across the three tiers tells you where the industry thinks value will accumulate. Incumbents already own distribution and manufacturing scale, so they are playing defense while expanding into new verticals. Growth-stage challengers are racing to lock up specific markets like automotive vision or industrial IoT before the big players notice. And early-stage startups are largely betting on one of two things: a fundamentally different hardware architecture, or a software layer that makes the fragmentation problem irrelevant regardless of which chip wins.

That second bet is the least capitalized category in the entire stack. Which brings us to the part of the stack nobody is talking about loudly enough.

The software abstraction layer

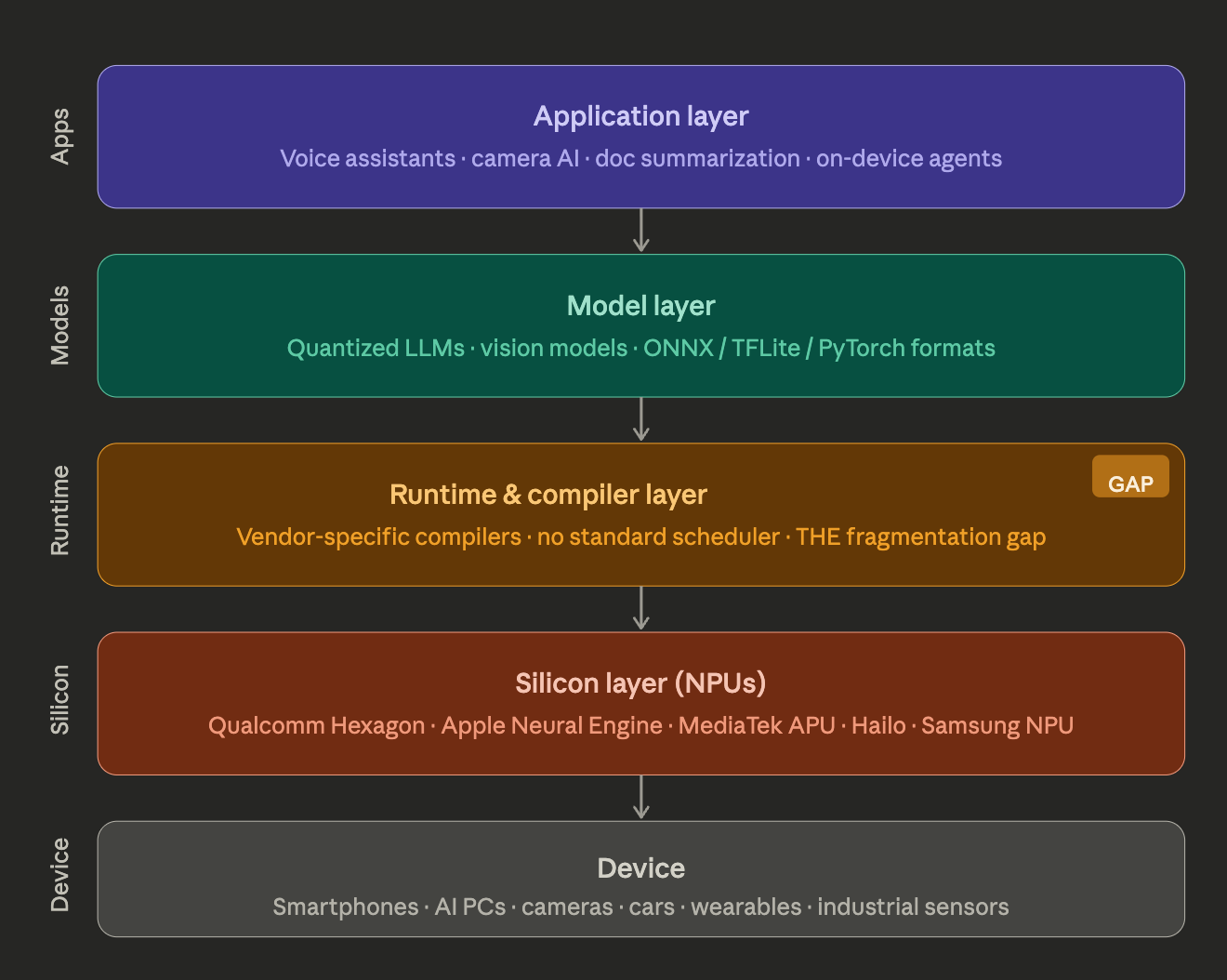

The runtime and compiler layer is the unglamorous middle of the stack that most people outside the industry have never heard of. But it is where the deepest pain lives. When a developer builds an AI feature, the model they train has to get translated into instructions a specific chip can actually execute. Every NPU vendor has built their own proprietary tool to do this translation, and none of them are compatible with each other. So a developer who wants their app to work well across a Samsung phone, a Qualcomm phone, and an Intel laptop is not writing one codebase. They are maintaining several, one per hardware target, each needing its own optimization and upkeep every time something changes.
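
A rough sketch of what a neutral compile step could look like from the developer's side: one entry point, with each vendor toolchain hidden behind it. Everything here is an assumption made for illustration. The target names (`vendor_a_npu`, `vendor_b_npu`), the `compile_for` function, and the string "artifacts" are placeholders, not any real vendor's API or output format.

```python
from typing import Callable, Dict

# Hypothetical model description; a real one would be a graph of operators.
Model = Dict[str, object]

# Registry mapping a hardware target name to its compile function.
BACKENDS: Dict[str, Callable[[Model], str]] = {}


def backend(target: str):
    """Decorator that registers a compile function for one hardware target."""
    def register(fn):
        BACKENDS[target] = fn
        return fn
    return register


@backend("vendor_a_npu")
def compile_vendor_a(model: Model) -> str:
    # Stand-in for vendor A's proprietary toolchain.
    return f"vendor_a_blob({model['name']}, int8)"


@backend("vendor_b_npu")
def compile_vendor_b(model: Model) -> str:
    # Stand-in for vendor B's toolchain, with a different format and precision.
    return f"vendor_b_blob({model['name']}, fp16)"


def compile_for(model: Model, target: str) -> str:
    """Single entry point the app developer calls, whatever the chip."""
    if target not in BACKENDS:
        raise ValueError(f"no backend registered for {target!r}")
    return BACKENDS[target](model)


model = {"name": "face_detector", "ops": ["conv", "relu", "softmax"]}
artifacts = {target: compile_for(model, target) for target in sorted(BACKENDS)}
print(artifacts)
```

The developer maintains one model and one call site. The per-vendor work does not disappear, but it moves behind the registry, where whoever owns the layer maintains it once for everyone.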

The runtime problem sits on top of that and is even harder to solve. A runtime is what manages the AI feature while it is actually running on your device, deciding what goes to which chip, juggling memory, and handling the queue when multiple apps are all asking for compute at the same time. Right now that layer is either missing entirely or locked to a single vendor's hardware. There is no neutral system that can look at a device with an NPU, a GPU, and a CPU and intelligently divide the work across all three.
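
As a minimal sketch of "deciding what goes to which chip," the snippet below places each operator on the first available processor that supports it. The capability table, operator names, and the `place` function are hypothetical and chosen only to illustrate the idea; the greedy rule is a stand-in, not a description of any existing runtime.

```python
# Hypothetical capability table: which operator types each processor can run,
# listed in the order the runtime prefers them for AI work.
DEVICES = [
    ("npu", {"conv", "matmul", "relu"}),
    ("gpu", {"conv", "matmul", "relu", "softmax"}),
    ("cpu", {"conv", "matmul", "relu", "softmax", "custom_postprocess"}),
]


def place(ops, busy=frozenset()):
    """Assign each op to the first non-busy device that supports it."""
    plan = []
    for op in ops:
        for name, supported in DEVICES:
            if name not in busy and op in supported:
                plan.append((op, name))
                break
        else:
            # No device in the table could take this op.
            raise RuntimeError(f"no available device supports {op!r}")
    return plan


ops = ["conv", "relu", "matmul", "softmax", "custom_postprocess"]

plan_idle = place(ops)                      # every processor is free
plan_npu_busy = place(ops, busy={"npu"})    # another app holds the NPU

print(plan_idle)
print(plan_npu_busy)
```

With everything idle, the first three operators land on the NPU, `softmax` falls back to the GPU, and the custom step runs on the CPU. When the NPU is taken, the same model shifts to the GPU. A production runtime would also weigh data-transfer cost between processors, memory pressure, and live load, which this sketch ignores.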

Whoever builds that neutral layer does not just solve a developer headache. They become the infrastructure every AI application has to run through, on every device, regardless of what silicon is underneath. That is the kind of position that compounds. It's also exactly the kind of company Forum backs -- from pre-idea through pre-seed.

–

Final Thoughts

Edge AI is not a chip story. It never really was. Chips are the battlefield, but the war is about who gets to sit between the hardware and the developers who build on top of it. Every major platform shift in computing history has produced one company that owned that middle layer, and that company captured more value than most of the hardware vendors combined.

We think the same thing happens here. The hardware will keep fragmenting for the next several years, maybe longer. No single silicon vendor is close to the kind of dominance that would let them impose their software stack on the rest of the industry. That fragmentation is painful for developers today, but it is the exact condition that creates the opening for a neutral infrastructure play.

The founder we are looking for is not trying to build a better chip. They are building the software layer that works regardless of what chip is underneath.

Forum Ventures operates a venture studio, accelerator, and pre-seed fund, each built for a different stage of the B2B software journey, with check sizes ranging from $100k to $1M depending on stage. If you're building the infrastructure layer for edge AI -- runtime, compiler tooling, or the abstraction layer between hardware and developers -- we’d love to hear from you!

Frequently asked questions

What is the edge AI software abstraction layer?

The edge AI software abstraction layer is a runtime and compiler layer that sits between AI applications and the diverse NPU hardware underneath them. Because every chip vendor (Qualcomm, Apple, MediaTek, Intel, and others) has built proprietary toolchains, a developer today must maintain separate codebases for each hardware target. An abstraction layer would allow a single codebase to compile and run optimally across all of them.

Why is edge AI hardware so fragmented?

Edge AI fragmentation happened because dozens of companies independently added AI capabilities to their silicon between 2015 and 2025, each optimizing for their own market without any shared standard. Qualcomm, Apple, Samsung, and MediaTek all built proprietary NPUs with incompatible toolchains, creating the fragmented landscape developers face today.

What is an NPU and how does it differ from a GPU?

An NPU (Neural Processing Unit) is a dedicated hardware accelerator designed specifically for the matrix math that powers AI inference. Unlike a GPU, which is a general-purpose parallel processor originally built for graphics, an NPU is purpose-built for neural network operations and typically delivers far better performance-per-watt for AI workloads, which is critical for battery-powered edge devices.

Which companies are leading edge AI semiconductor investment in 2025?

VC funding for AI semiconductor companies reached $8.4 billion in 2025. Notable growth-stage players include Hailo, SiMa.ai, Tenstorrent, Axelera AI (which raised €61.6M from EuroHPC in March 2025), and Rebellions ($124M Series B). Early-stage startups attracting attention include MemryX, Mythic, EdgeCortix, and Nexa AI.

What is the concurrent model problem in AI PCs?

The concurrent model problem refers to the challenge of multiple AI applications (a voice assistant, a writing tool, a camera app, background system intelligence) all competing for the same NPU simultaneously. No scheduler or runtime currently manages this gracefully across multiple AI workloads, making it an open and commercially significant problem.

Why does the software layer matter more than the chip in edge AI?

Every major computing platform shift, from PC operating systems to mobile app stores, has produced one company that owned the middleware layer between hardware and developers, and that company typically captured more value than most hardware vendors combined. In edge AI, because no single silicon vendor is close to dominance, the fragmentation creates an opening for a neutral runtime layer that works regardless of which chip is underneath.

What is TOPS and why does it matter for edge AI chips?

TOPS stands for tera operations per second and measures how many trillions of operations a chip can perform each second. It is the most commonly quoted metric for comparing NPU performance. For edge deployments, TOPS-per-watt is often more meaningful than raw TOPS. For example, Hailo's Hailo-8 delivers 26 TOPS at under 3 watts, which works out to more than 8.6 TOPS per watt, a ratio competitive with much larger chips.
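
The arithmetic behind that comparison is simple enough to sketch. The Hailo-8 figures below come from the text above; the "larger chip" is a hypothetical accelerator with made-up numbers, included only to show how the ratio is read.

```python
def tops_per_watt(tops: float, watts: float) -> float:
    """Efficiency metric: trillions of operations per second, per watt."""
    if watts <= 0:
        raise ValueError("watts must be positive")
    return tops / watts


# Figures from the text: 26 TOPS at "under 3 W". Using 3 W as the ceiling
# gives a lower bound on efficiency.
hailo8 = tops_per_watt(26, 3)

# Hypothetical larger accelerator, invented for comparison only.
larger_chip = tops_per_watt(100, 25)

print(f"Hailo-8 (lower bound):    {hailo8:.1f} TOPS/W")
print(f"Hypothetical larger chip: {larger_chip:.1f} TOPS/W")
```

The larger chip wins on raw TOPS, but in a camera or wearable with a fixed power budget, the ratio is usually the number that decides what can ship.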

Who invests in edge AI infrastructure startups?

Forum Ventures is actively investing in edge AI infrastructure startups in 2026, including founders building runtime layers, compiler tooling, and software abstraction layers for edge AI deployments. Forum operates a venture studio, accelerator, and pre-seed fund, with check sizes ranging from $100k to $1M depending on stage. Founders building at any stage of the edge AI software stack can apply at forumvc.com/pitch-us.
